How I Got Gemma 4 e:2B to Talk Like a Pirate

A journal of fine-tuning Google's Gemma 4 e:2B model using LoRA on an Apple Silicon Mac Mini.

- Hardware: Mac Mini M4 (4P+6E cores), 24GB unified RAM, 460GB SSD

- Base model: Gemma 4 e:2B instruction-tuned, 4-bit quantized

- Framework: Apple MLX (mlx-lm)

- Goal: Make it talk like a pirate. Every response. Arr.

Environment Reconnaissance

Timestamp: 2026-04-06 10:04

Checked what we're working with:

- Python: 3.13.12 via Homebrew; system Python 3.9.6 is too old for ML work

- ML packages installed: None. Starting from scratch.

- Disk space: 279GB free, plenty

- HuggingFace token: None (turns out we didn't need one!)

- ollama: Running with gemma4:e2b already pulled (7.2GB GGUF)

Key decision: MLX over PyTorch. I chose Apple's MLX framework (mlx-lm) instead of the traditional PyTorch + PEFT + bitsandbytes stack. Reasons:

- MLX runs natively on Apple Silicon Metal GPU, no CUDA needed

- Built-in LoRA fine-tuning with the mlx_lm lora command

- bitsandbytes 4-bit quantization doesn't work on MPS anyway, which defeats the whole point of QLoRA on a Mac

- Simpler pipeline with fewer dependencies

- Unified memory means the GPU can use all 24GB, no copying between CPU/GPU RAM

Step 1: Python Environment Setup

Timestamp: 2026-04-06 10:04

python3.13 -m venv pirate-venv
source pirate-venv/bin/activate
pip install mlx-lm huggingface_hub

Installed: mlx 0.31.1, mlx-lm 0.31.1, mlx-metal 0.31.1, transformers 5.5.0, huggingface_hub 1.9.0, and dependencies. Total venv size: ~900MB.
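To double-check what actually landed in the venv, something like the following could be run inside it. This is a minimal sketch using only the standard library; the helper name `installed_versions` is my own, and the package list is simply copied from the install log above.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Map each package name to its installed version, or None if absent."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    # Package names taken from the install log; adjust to taste.
    wanted = ["mlx", "mlx-lm", "mlx-metal", "transformers", "huggingface_hub"]
    for name, ver in installed_versions(wanted).items():
        print(f"{name}: {ver or 'not installed'}")
```

A `None` for any of these usually means the venv was not activated before running pip.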

Step 2: Model Selection

Timestamp: 2026-04-06 10:05

Searched HuggingFace for MLX-format Gemma 4 2B models. Found mlx-community/gemma-4-e2b-it-4bit — 15K downloads, ungated (Apache 2.0 license), no HF token needed. This was a relief since we had no token set up.

Downloaded: 3.4GB, took ~30 seconds.

Snag #1: mlx-lm didn't support Gemma 4

The released version (0.31.1) didn't have a gemma4 model module — only up to gemma3. Gemma 4 is brand new.

Fix: Installed from GitHub HEAD:

pip install 'mlx-lm @ git+https://github.com/ml-explore/mlx-lm.git'

This got us 0.31.2, which included gemma4.py and gemma4_text.py. Crisis averted.
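A quick way to tell whether an installed mlx-lm knows about a given architecture is to look for the model module before trying to load anything. The sketch below assumes model definitions live under `mlx_lm.models.<name>`, which matches the gemma4.py file mentioned above; the helper name `has_model_module` is hypothetical.

```python
import importlib.util


def has_model_module(package, model_name):
    """Return True if <package>.models.<model_name> can be located."""
    try:
        spec = importlib.util.find_spec(f"{package}.models.{model_name}")
    except ImportError:
        # Raised when the parent package (or its models subpackage) is missing.
        return False
    return spec is not None


if __name__ == "__main__":
    for name in ("gemma3", "gemma4"):
        print(name, has_model_module("mlx_lm", name))
```

On the released 0.31.1 this would be expected to report gemma4 as missing, and as present after the GitHub install.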

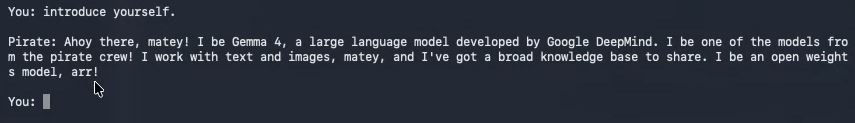

Base model test (pre-training sanity check)

Q: What is the capital of France?
A: The capital of France is **Paris**.

Normal, boring, not a pirate. Time to fix that.

Step 3: Creating the Pirate Dataset

Timestamp: 2026-04-06 10:05

Created 54 hand-crafted conversation examples covering diverse topics — all with pirate-speak responses. The dataset includes:

- Greetings and casual chat

- Science (photosynthesis, gravity, DNA, black holes)

- Math (arithmetic, Pythagorean theorem, pi)

- Cooking recipes

- Technology (internet, AI, blockchain, programming)

- History (Rome, Cleopatra)

- Life advice and emotional support

- Geography, animals, music, weather, sports

- Creative writing

- Short factual answers

Key design choices:

- Every response uses pirate vocabulary: "arr", "matey", "ye", "me" (instead of "my"), "aye", "avast", "ahoy"

- Nautical metaphors woven into explanations (gravity = invisible anchor, DNA = treasure map)

- The model should still be helpful and accurate — just pirate-flavored

- Mixed response lengths: some short ("Four, matey!"), some long (multi-paragraph recipes)
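One cheap way to enforce the vocabulary rule is to scan each assistant response for at least one pirate word before it goes into the dataset. This is a rough sketch, not the check I actually used; the word list comes from the design choices above, and "me" is left out on purpose because it also appears in ordinary English.

```python
import re

# Vocabulary from the design notes; "me" is excluded as too ambiguous.
PIRATE_WORDS = {"arr", "matey", "ye", "aye", "avast", "ahoy"}


def pirate_words_used(text):
    """Return the sorted pirate words that appear as whole words in text."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return sorted(tokens & PIRATE_WORDS)


if __name__ == "__main__":
    print(pirate_words_used("Four, matey!"))
    print(pirate_words_used("The capital of France is Paris."))
```

An empty result flags a response that slipped back into plain English.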

Split: 43 train / 5 validation / 6 test (80/10/10)
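The split itself is easy to script. The sketch below is not the script I used, just one way it might look: it shuffles the chat examples with a fixed seed and writes train.jsonl, valid.jsonl, and test.jsonl, which are the file names I assume mlx_lm lora looks for in the data directory. The function name and the seed are arbitrary.

```python
import json
import random
from pathlib import Path


def write_splits(examples, out_dir, n_valid=5, n_test=6, seed=0):
    """Shuffle chat examples and write train/valid/test JSONL files."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    splits = {
        "valid": shuffled[:n_valid],
        "test": shuffled[n_valid:n_valid + n_test],
        "train": shuffled[n_valid + n_test:],
    }
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for name, rows in splits.items():
        with open(out / f"{name}.jsonl", "w", encoding="utf-8") as f:
            for row in rows:
                # One JSON object per line, each with a "messages" array.
                f.write(json.dumps(row, ensure_ascii=False) + "\n")
    return {name: len(rows) for name, rows in splits.items()}
```

With 54 examples and the defaults, this yields the 43 / 5 / 6 split described above.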

Format: JSONL with messages array (chat format):

{"messages": [{"role": "user", "content": "..."}, {"role": "assistant", "content": "..."}]}

Step 4: LoRA Fine-Tuning

Timestamp: 2026-04-06 10:06:23 (start) → 10:07:54 (end)

Training time: 91 seconds

mlx_lm lora \
  --model mlx-community/gemma-4-e2b-it-4bit \
  --data pirate-data \
  --train --test \
  --batch-size 1 \
  --num-layers 8 \
  --iters 200 \
  --learning-rate 1e-4 \
  --steps-per-report 10 \
  --steps-per-eval 50 \
  --adapter-path pirate-adapter \
  --save-every 50 \
  --max-seq-length 2048 \
  --mask-prompt

Hyperparameter choices:

- batch-size 1: Small dataset, no need for larger batches

- num-layers 8: Fine-tune the last 8 transformer layers (of 35 total)

- iters 200: Enough to learn the style without too much overfitting

- learning-rate 1e-4: Standard LoRA learning rate

- mask-prompt: Only compute loss on the assistant response, not the user prompt. This teaches the model what to say, not what questions look like

Trainable parameters: 3.6M out of 4,647M (0.078%) — that's the beauty of LoRA. We're training less than one-tenth of one percent of the model's parameters.
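The percentage is simple arithmetic, and the reason LoRA stays so small is that each adapted weight matrix only gains two thin matrices. The sketch below shows both; the layer dimensions and rank in the example call are placeholders I made up, not Gemma 4's real shapes or the rank used in this run.

```python
def trainable_percent(trainable_millions, total_millions):
    """Share of parameters being trained, as a percentage."""
    return 100.0 * trainable_millions / total_millions


def lora_params(d_in, d_out, rank):
    """Parameters added by one LoRA pair: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


if __name__ == "__main__":
    # Rounded figures from the training log above.
    print(f"{trainable_percent(3.6, 4647):.3f}%")
    # Hypothetical square layer at a hypothetical rank, for scale only.
    print(lora_params(d_in=1024, d_out=1024, rank=8))
```

With the rounded inputs the first figure comes out at about 0.077%; the 0.078% in the log presumably comes from the unrounded parameter counts.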

Training curve

| Iter | Train Loss | Val Loss | Notes |

|---|---|---|---|

| 1 | — | 5.176 | Starting point |

| 10 | 4.352 | — | |

| 20 | 2.699 | — | Fast initial drop |

| 30 | 2.474 | — | |

| 50 | 1.742 | 2.577 | Best val loss |

| 100 | 0.408 | 3.130 | Val rising — overfitting begins |

| 150 | 0.199 | 3.386 | |

| 200 | 0.098 | 3.264 | Train near-zero, val plateaued |

Peak memory: 4.0 GB — well within our 24GB. Throughput: ~270 tokens/sec.

Key observation: Classic overfitting pattern. Val loss bottomed at iter 50, then train loss kept dropping while val loss rose. This is expected with only 43 training examples — the model memorizes the training data. But for style transfer, some overfitting is actually fine — we want it to strongly adopt the pirate voice.
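If generalization mattered more than style, the natural move would be to keep the checkpoint with the lowest validation loss instead of the final one. A minimal sketch of that selection, using the validation numbers from the table above (the function name is my own):

```python
# Validation loss by iteration, copied from the training curve.
VAL_LOSS = {1: 5.176, 50: 2.577, 100: 3.130, 150: 3.386, 200: 3.264}


def best_iteration(val_loss_by_iter):
    """Return the iteration with the lowest validation loss."""
    return min(val_loss_by_iter, key=val_loss_by_iter.get)


if __name__ == "__main__":
    it = best_iteration(VAL_LOSS)
    print(f"best checkpoint: iter {it} (val loss {VAL_LOSS[it]})")
```

Because the run used --save-every 50, a checkpoint from iteration 50 should be sitting in pirate-adapter alongside the final weights.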

Test loss: 3.419 (perplexity 30.6)
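Perplexity here is just the exponential of the mean cross-entropy loss (in nats), so the two numbers carry the same information. A tiny sketch:

```python
import math


def perplexity(mean_loss):
    """Perplexity for a mean per-token cross-entropy loss given in nats."""
    return math.exp(mean_loss)


if __name__ == "__main__":
    print(f"{perplexity(3.419):.2f}")
```

Plugging in the rounded loss of 3.419 gives roughly 30.5, in line with the reported 30.6, which was presumably computed from the unrounded loss.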

Adapter size: 70MB (stored as pirate-adapter/adapters.safetensors)

Step 5: Testing the Pirate Model

Timestamp: 2026-04-06 10:08

Without sampling parameters (greedy decoding)

Results were mixed:

- Great: "The capital of France be the city of Paris" ✓

- Great: "Arr, gather 'round and I'll tell ye about gravity..." ✓

- Bad: The joke response degenerated into "Har har har har har har..." repeated hundreds of times

This repetition is a classic issue with small models + small datasets + greedy decoding.

With temperature + min_p sampling

Added temp=0.7, min_p=0.05:

Q: Tell me a joke.
A: Arr, here be one fer ye! Why couldn't the pirate play cards?
Because he was standin' on the deck! Har har har! ...Aye, me
jokes be terrible, but at least they be free, arr!

Q: What is the capital of France?
A: The capital of France be the city of **Paris**. She is the
capital and largest city of France, matey!

Q: What is blockchain?
A: Avast! Blockchain be like a ship's log that everyone on the
crew has a copy of, and nobody can change! [full accurate
explanation with pirate metaphors]

Q: How do I make pasta?
A: Arr, pasta making be the mark of a true cook! [detailed recipe
with "The pasta water should taste like the sea" — nice touch]

The sampling parameters completely fixed the repetition issue. Temperature 0.7 adds just enough randomness to prevent the model from getting stuck in loops, while min_p keeps it from going off the rails.
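For intuition, here is roughly what those two knobs do to the next-token distribution. This is a simplified pure-Python illustration of the general technique, not mlx-lm's actual implementation: temperature rescales the logits before the softmax, and min_p drops every token whose probability falls below min_p times the top token's probability.

```python
import math
import random


def softmax(logits, temp=1.0):
    """Temperature-scaled softmax; lower temp sharpens the distribution."""
    scaled = [x / temp for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def min_p_filter(probs, min_p):
    """Zero out tokens below min_p * max(probs), then renormalize."""
    cutoff = min_p * max(probs)
    kept = [p if p >= cutoff else 0.0 for p in probs]
    total = sum(kept)
    return [p / total for p in kept]


def sample(logits, temp=0.7, min_p=0.05, rng=random):
    """Draw one token index after temperature scaling and min_p filtering."""
    probs = min_p_filter(softmax(logits, temp), min_p)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    # Toy logits: two plausible tokens and one very unlikely one.
    print(min_p_filter(softmax([5.0, 4.0, 0.0]), 0.05))
```

Greedy decoding always takes the single most likely token, which is how a loop like "har har har" can sustain itself; sampling among the surviving plausible tokens gives the model a way out.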

Verdict: It works! The model consistently uses pirate vocabulary, nautical metaphors, and maintains the pirate character while still being helpful and accurate. The style transfer is thorough — even unseen topics get the full pirate treatment.

Step 6: Attempted GGUF Export for Ollama

Timestamp: 2026-04-06 10:10–10:20

This is where things got bumpy. I wanted to export the model to GGUF format so it could run in ollama like any other model.

Attempt 1: Python gguf package + convert_hf_to_gguf.py

- Installed gguf 0.18.0 and llama-cpp-python

- convert_hf_to_gguf.py needed PyTorch → installed CPU-only torch

- Failed: AttributeError: MODEL_ARCH has no attribute 'GEMMA4'. The gguf library doesn't know about Gemma 4 yet, even from the latest llama.cpp main branch

Attempt 2: ollama create from safetensors

- Fused the LoRA adapter into the base model (2.5GB MLX safetensors)

- Dequantized from 4-bit to bf16 (8.7GB) since ollama needs full-precision weights for its own quantization

- ollama create pirategemma -f Modelfile: it worked! Ollama accepted the model and created a 9.3GB GGUF

- But: Running it crashed with a nil pointer dereference in Embedding.Forward

The crash is likely because the MLX model only has the text decoder, not the full multimodal stack (vision + audio encoders) that ollama's Gemma 4 implementation expects. The weights converted fine, but at inference time it tried to use a nonexistent embedding layer.
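One way to test that theory would be to list which top-level tensor groups the fused checkpoint actually contains. A .safetensors file starts with an 8-byte little-endian header length followed by a JSON header keyed by tensor name, so this needs nothing beyond the standard library. The sketch below is something I could have run but did not; the file path is a placeholder, and what the vision or audio tensors would be called is a guess.

```python
import json
import struct
from collections import Counter


def tensor_prefixes(path, depth=1):
    """Count tensor names in a .safetensors file by their leading name parts."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len))
    # "__metadata__" is an optional non-tensor entry in the header.
    names = [key for key in header if key != "__metadata__"]
    return Counter(".".join(name.split(".")[:depth]) for name in names)


if __name__ == "__main__":
    import sys

    # Usage: python inspect_tensors.py path/to/model.safetensors (hypothetical path)
    if len(sys.argv) > 1:
        for prefix, count in sorted(tensor_prefixes(sys.argv[1]).items()):
            print(f"{count:6d}  {prefix}")
```

If only language-model prefixes show up and nothing that looks like a vision or audio tower, that would support the missing-encoder explanation.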

Resolution

Cleaned up the failed intermediate files (~11.2GB) and accepted that GGUF export isn't ready for Gemma 4 yet. The model runs great through MLX. Created run-pirate.sh as a simple interactive chat script.

Artifacts

| File | Size | Purpose |

|---|---|---|

| pirate-adapter/adapters.safetensors | 70MB | The LoRA adapter weights (the magic) |

| pirate-adapter/adapter_config.json | <1KB | LoRA configuration |

| pirate-data/ | 60KB | Training dataset (54 examples) |

| pirate-venv/ | 900MB | Python venv with MLX + dependencies |

| run-pirate.sh | 1KB | Interactive chat script |

| ~/.cache/huggingface/.../gemma-4-e2b-it-4bit/ | 3.4GB | Base model (HF cache) |

Total disk used: ~4.4GB (mostly the base model in HF cache)

What I Learned

Things that went well

- MLX is excellent for Apple Silicon fine-tuning. The entire training took 91 seconds, used only 4GB of memory, and required minimal configuration. The mlx_lm lora CLI is beautifully simple.

- LoRA is remarkably efficient. Training 0.078% of parameters was enough for a complete style transformation. The adapter is only 70MB.

- Small datasets work for style transfer. 43 training examples were enough to thoroughly pirate-ify the model. Style is more about how things are said than what is said, so the model's existing knowledge stays intact.

- The mlx-community model being ungated saved a lot of friction. No HuggingFace token, no license agreement clicking.

Things that went wrong

- Gemma 4 is too new. Neither the released mlx-lm (0.31.1) nor the gguf Python library supported it. Had to install mlx-lm from GitHub HEAD.

- GGUF export is broken for Gemma 4. Neither the llama.cpp converter nor ollama's import could produce a working GGUF. The gguf library lacks the architecture definition, and ollama's import crashes on the incomplete multimodal model.

- Greedy decoding + small dataset = repetition loops. The joke response degenerated into infinite "har har har" without sampling parameters. Solved with temp=0.7 + min_p=0.05.

- Overfitting was immediate. Val loss bottomed at iter 50 of 200. More data or regularization (dropout, weight decay) would help if we wanted a more generalizable model.

If I did it again

- More diverse training data — 100-200 examples would reduce overfitting

- Include multi-turn conversations — current data is all single-turn

- Try DPO/preference training — "pirate response" vs "normal response" pairs might give cleaner results

- Wait for GGUF tooling to catch up to Gemma 4 — then export to ollama would just work

- Experiment with num-layers — 8 worked fine, but trying 4 or 16 might be interesting
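For the multi-turn idea, the same messages format should stretch to longer conversations by alternating user and assistant turns. Below is a sketch of what such a record and a basic sanity check might look like; the conversation text is invented for illustration and is not from the actual dataset, and the function name is my own.

```python
def is_alternating_chat(record):
    """True if messages strictly alternate user/assistant, starting with user."""
    messages = record.get("messages", [])
    if len(messages) < 2 or len(messages) % 2 != 0:
        return False
    for i, msg in enumerate(messages):
        expected = "user" if i % 2 == 0 else "assistant"
        if msg.get("role") != expected or not msg.get("content"):
            return False
    return True


# Illustrative record only; not taken from the real training data.
multi_turn = {
    "messages": [
        {"role": "user", "content": "What is 2 + 2?"},
        {"role": "assistant", "content": "Four, matey!"},
        {"role": "user", "content": "And doubled?"},
        {"role": "assistant", "content": "Eight, arr! A fine number fer a crew."},
    ]
}

if __name__ == "__main__":
    print(is_alternating_chat(multi_turn))
```

Running a check like this over every line of the JSONL files before training would catch records with a missing or misordered turn.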

The numbers

- Setup time: ~2 minutes (venv creation, pip install, model download)

- Dataset creation: Handwritten, but a script generates the JSONL splits

- Training time: 91 seconds for 200 iterations

- Total end-to-end: ~15 minutes including all troubleshooting

- Cost: $0 (all local, open-source model, open-source tools)

How to Run It If You Followed These Steps

Save this file as run-pirate.sh (replace the cd path with your own):

#!/bin/bash
# Run the pirate Gemma model interactively
cd /Users/robertviragh/pirate
source pirate-venv/bin/activate
python3 -c "
from mlx_lm import load, generate
from mlx_lm.sample_utils import make_sampler
print('Loading pirate Gemma 4 e:2B...')
model, tokenizer = load('mlx-community/gemma-4-e2b-it-4bit', adapter_path='pirate-adapter')
sampler = make_sampler(temp=0.7, min_p=0.05)
print('Ready! Type your message (Ctrl+C to quit).\n')
while True:
    try:
        user_input = input('You: ')
        if not user_input.strip():
            continue
        chat = [{'role':'user','content': user_input}]
        prompt = tokenizer.apply_chat_template(chat, add_generation_prompt=True, tokenize=False)
        result = generate(model, tokenizer, prompt=prompt, max_tokens=300, sampler=sampler)
        print(f'\nPirate: {result}\n')
    except KeyboardInterrupt:
        print('\nFair winds, matey!')
        break
"

Then run:

# Interactive chat (make the script executable first)
chmod +x run-pirate.sh
./run-pirate.sh

Or manually:

source pirate-venv/bin/activate
python3 -c "
from mlx_lm import load, generate
from mlx_lm.sample_utils import make_sampler
model, tokenizer = load('mlx-community/gemma-4-e2b-it-4bit', adapter_path='pirate-adapter')
sampler = make_sampler(temp=0.7, min_p=0.05)
chat = [{'role':'user','content':'Tell me about black holes'}]
prompt = tokenizer.apply_chat_template(chat, add_generation_prompt=True, tokenize=False)
print(generate(model, tokenizer, prompt=prompt, max_tokens=300, sampler=sampler))
"

Conclusion

We've now fine-tuned Gemma 4 on our own hardware.

Written April 6, 2026. Arr.